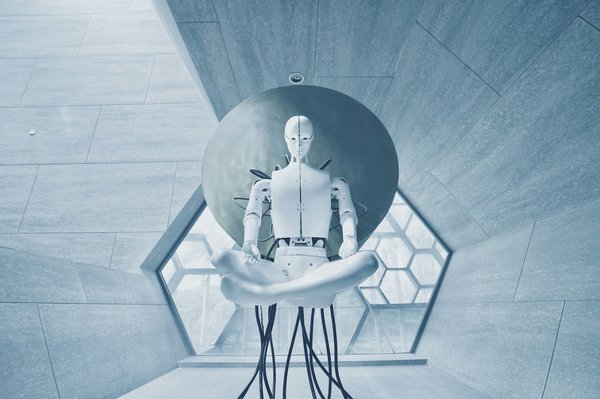

As robotics and AI advance, their overlap with childcare is growing, and with it the concept of autonomous childcare robots. The concept is fascinating, but it also raises a number of ethical questions. Let’s look at what those questions are and the role they play in determining the future of robotics in childcare.

The Moral Implications of Robots Replacing Humans in Childcare

As we consider the possibility of using autonomous robots in childcare, we must first understand the moral implications involved. Robots are devoid of emotion, empathy, and the human ability to read complex social cues. Google and other industry giants are making strides in AI technology, but can a robot truly replace the warm human touch in childcare?

In recent years, scholars have voiced concerns that childcare robots could harm the emotional development of children. Robotics scholar Noel Sharkey, for one, has argued that children may develop attachments to robotic caregivers, with the potential for psychological harm.

Notably, robots lack the capacity for moral virtue, a quality we expect of human caregivers. They can follow rules, but they cannot model for a child the difference between right and wrong, or instill the values and ethics essential for social interactions. These considerations require us to examine the moral implications of letting robots take over the human role in childcare.

The Responsibility of Robotics Companies

An equally important ethical consideration lies with the robotics companies themselves. As they develop these autonomous childcare robots, what is their responsibility towards ensuring that these machines do no harm?

There exists a potential for misuse, and even abuse, with these machines. For instance, they could be programmed to perform tasks that are unethical or even illegal. Businesses, therefore, carry the burden of developing robots that are not just functional but also adhere to a strict code of ethics.

Google, for example, has published a set of AI Principles that it says guide its AI work. The principles have included commitments to avoid creating or reinforcing unfair bias and to uphold high standards of scientific excellence.

This commitment to ethical responsibility should not be just a corporate guideline, but an industry-wide standard. It is crucial that robotics companies take it upon themselves to ensure their creations benefit society without causing harm.

The Ethical Debate on Child-Robot Interactions

Another ethical consideration is the interaction between children and robots itself. Researchers have found that children, especially those younger than nine years old, are more likely than adults to anthropomorphize robots and treat them as if they were living beings.

The question that arises, then, is whether it is ethical to allow children to form emotional bonds with entities that cannot reciprocate these emotions. Critics argue that these relationships could lead to emotional harm, and that no amount of programming can instill a sense of empathy or emotion in a robot.

However, proponents of autonomous childcare robots argue that these machines can be programmed to respond appropriately to a child’s emotional state and provide comfort. While this might not be an authentic emotional response, they argue that it might be enough to meet a child’s need for companionship.

The Ethical Implications of Privacy and Security

Privacy and security are critical ethical considerations associated with the use of autonomous childcare robots. These robots, equipped with AI technology, have the capacity to record and store vast amounts of information about the children they interact with. This raises questions about who has access to this data and how it might be used.

In a world where data breaches are commonplace, the possibility of sensitive information about a child falling into the wrong hands is a real concern. This is further complicated by the fact that many data protection laws were not written with AI and robotics in mind and may not adequately cover them.

Moreover, the issue of privacy isn’t just about protecting data from external threats. There’s an ethical debate to be had about whether it’s right to use these robots to monitor children’s behavior, and whether it’s fair to children to have their every action watched and possibly recorded.

The conversation about autonomous childcare robots is undoubtedly complex. As we look towards the future and the potential these robots hold, it is crucial that these discussions continue, so that AI is used safely and ethically in childcare. Above all, the dialogue should be centered on the child’s wellbeing, ensuring that children benefit from this technology rather than being harmed by it.

The Role of Moral Agency in Autonomous Childcare Robots

In the realm of social robotics, the question of moral agency is often raised. Moral agency, defined as the ability to make ethical decisions and take responsibility for these decisions, is a trait that is currently exclusive to human beings. However, when we consider the introduction of autonomous childcare robots, the concept of moral agency becomes a focal point for ethical debates.

Scholarly work indexed in Google Scholar, PubMed, and Crossref, including articles in the International Journal of Social Robotics, has extensively discussed the role of moral agency in robots. A robot’s ability to make ethical decisions can directly affect how it interacts with children and how it influences their development. Therefore, it is not enough for these robots to merely mimic human behavior; they must also respect the ethical boundaries that define acceptable human behavior.

Let’s consider an example. Suppose an autonomous childcare robot is programmed to always prioritize the child’s happiness. If the child wants to skip school, should the robot allow it? A human caregiver would consider the long-term implications of skipping school and most likely say no. But the robot, lacking moral agency, might allow it if its programming is focused only on immediate happiness.
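The scenario above can be made concrete with a small Python sketch. It is a toy illustration only: the action names, the scores, and the `wellbeing_weight` parameter are all hypothetical and made up for this example, not taken from any real childcare robot.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Action:
    name: str
    immediate_happiness: float  # hypothetical score, invented for illustration
    long_term_wellbeing: float  # hypothetical score, invented for illustration


def choose_by_happiness(actions):
    """Pick the action that maximizes the child's immediate happiness only."""
    return max(actions, key=lambda a: a.immediate_happiness)


def choose_with_wellbeing(actions, wellbeing_weight=2.0):
    """Pick the action that maximizes happiness plus weighted long-term wellbeing.

    The weight is an assumption chosen by whoever designs the robot.
    """
    return max(
        actions,
        key=lambda a: a.immediate_happiness + wellbeing_weight * a.long_term_wellbeing,
    )


# Two made-up options for the "child wants to skip school" scenario.
ACTIONS = [
    Action("allow_skipping_school", immediate_happiness=0.9, long_term_wellbeing=-0.8),
    Action("insist_on_school", immediate_happiness=0.2, long_term_wellbeing=0.7),
]

if __name__ == "__main__":
    print(choose_by_happiness(ACTIONS).name)
    print(choose_with_wellbeing(ACTIONS).name)
```

With these invented numbers, the happiness-only policy allows the child to skip school, while the weighted policy does not. The second policy is still not moral agency: it only moves the judgment into weights and scores that a designer has to choose.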

Some proponents argue that it’s possible to program these robots with a set of moral rules. But the complexity of human morality makes it difficult to reduce it to a finite set of rules. Furthermore, the idea of robots as moral agents opens up a Pandora’s box of ethical issues, such as accountability for their actions. If a robot makes a decision that harms a child, who is responsible – the robot, the programmers, or the company that produced it?

In the context of autonomous childcare robots, the issue of moral agency is not just academically interesting, but fundamentally essential. It demands careful consideration and robust solutions to ensure the safety and well-being of children.

The Ethical Implications of Robot Friendships with Children

When it comes to autonomous childcare robots, a significant ethical consideration is the possibility of children forming friendships with robots. Studies have shown that children are capable of developing attachments to robots, treating them as if they were human beings. This phenomenon raises multiple ethical issues that require careful consideration.

On the one hand, robot friendship could offer companionship to children, especially those who are isolated or have difficulty forming friendships with their peers. On the other hand, these friendships are inherently unequal. Robots, lacking emotional capacity and moral status, cannot reciprocate the emotions or care that a child may invest in them.

This one-sided relationship can lead to emotional harm. Children might develop unrealistic expectations of their robot friends. They might also become overly reliant on them, which can hinder their ability to form healthy relationships with other people.

Researchers have also highlighted the potential for manipulation. As robots are programmed entities, there’s a risk that they could be programmed to manipulate children for various purposes. A child’s trust in their robot companion could be exploited, leading to ethical violations.

While there are potential benefits to robot friendships, the ethical implications cannot be ignored. The welfare of the child should always be the primary concern in the development and deployment of social robots.

Conclusion

The advent of autonomous childcare robots brings forth an array of ethical considerations. From understanding the moral implications of robots replacing humans in childcare, to the responsibility of robotics companies in ensuring ethical practices, these considerations are multifaceted and complex. The interaction between children and robots, the role of moral agency, and the implications of privacy and security are all crucial aspects that need to be carefully examined.

As we forge ahead in the era of artificial intelligence, we must keep the debate centered on the child’s wellbeing. Robots are tools, not replacements for human caregivers. They should be designed and deployed in a way that benefits children rather than harming them.

The ethical considerations surrounding autonomous childcare robots are not insurmountable. With rigorous research and strict guidelines, it is possible to address them. A future where robots play a beneficial role in childcare is plausible. However, it is a journey that must be undertaken with caution and responsibility, always keeping the best interest of the child at the forefront.